Feature Views

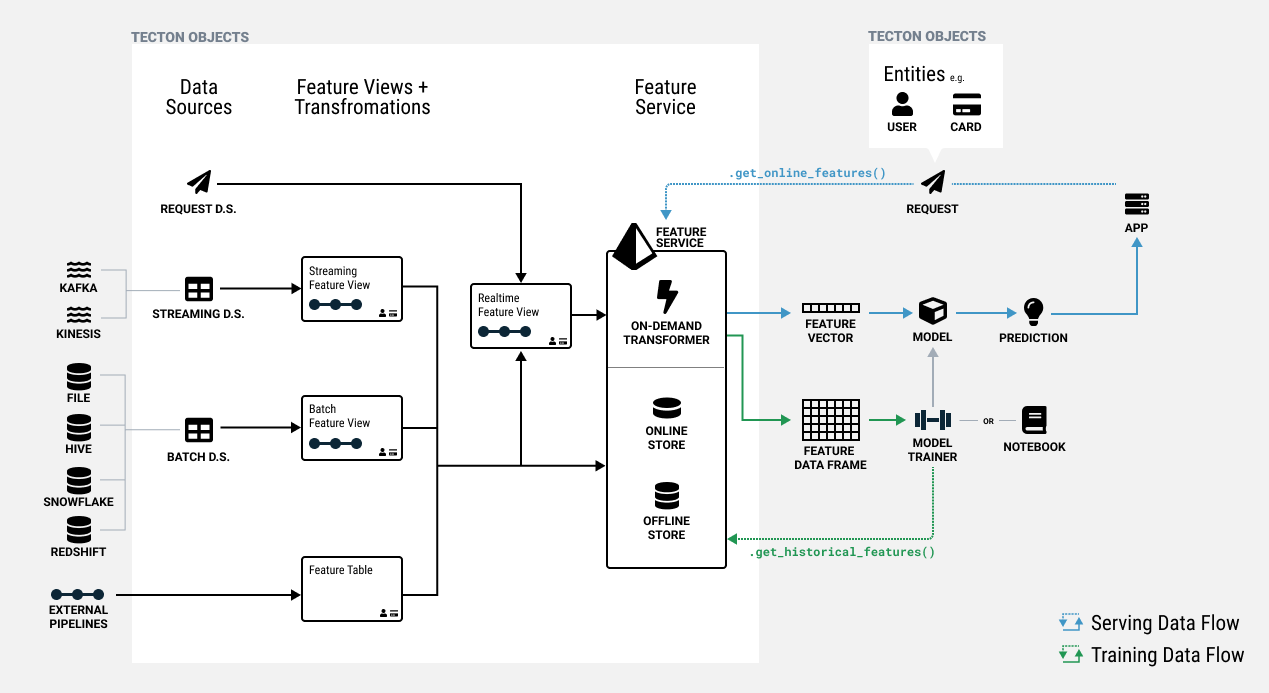

In Tecton, features are defined as a view on registered Data Sources or other Feature Views. Feature Views are the core abstraction that enables:

- Using one feature definition for both training and serving.

- Reusing features across models.

- Managing feature lineage and versioning.

- Orchestrating compute and storage of features.

A Feature View contains all information required to manage one or more related features, including:

- Transformation: A Feature View takes in one or more input sources and runs transformations to compute features. Sources can be Tecton Data Sources or in some cases, other Feature Views.

- Entities: The business entities that the features describe, such as Customer or Product. The entities dictate the join keys for the Feature View.

- Configuration: Materialization configuration for defining the orchestration and serving of features, as well as monitoring configuration.

- Metadata: Optional metadata about the features used for organization and discovery. This can include things like descriptions and tags.

The transformation and entities for a Feature View define the semantics of what the feature values truly represent. Changes to a Feature View's transformation or entities are therefore considered destructive and will result in the rematerialization of feature values.


Types of Feature Views

There are 3 types of Feature Views:

- Batch Feature Views run transformations on one or more Batch Sources and can materialize feature data to the Online and/or Offline Feature Store on a schedule.

- Stream Feature Views run transformations in near real time on a Stream Source or Push Data Source and can materialize data to the Online and Offline Feature Store.

- Realtime Feature Views run transformations at request time based on data from a Request Source, Batch Feature View, or Stream Feature View.

Defining a Feature View

A Feature View is defined using a decorator over a function that represents a pipeline of Transformations.

Here is an example of a Batch Feature View definition. See the individual Feature View type sections for more details and examples.

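```python
from datetime import datetime, timedelta

from tecton import Aggregate, TimeWindow, batch_feature_view
from tecton.types import Field, Int64

# `transactions_batch` (a Batch Source) and `user` (an Entity) are assumed to be
# defined elsewhere in the feature repository and imported here.


@batch_feature_view(
    sources=[transactions_batch],
    entities=[user],
    mode="pandas",
    aggregation_interval=timedelta(days=1),
    timestamp_field="timestamp",
    features=[
        # Count transactions over 1-, 30-, and 90-day windows.
        Aggregate(
            input_column=Field("transaction", Int64),
            function="count",
            time_window=TimeWindow(window_size=timedelta(days=1)),
        ),
        Aggregate(
            input_column=Field("transaction", Int64),
            function="count",
            time_window=TimeWindow(window_size=timedelta(days=30)),
        ),
        Aggregate(
            input_column=Field("transaction", Int64),
            function="count",
            time_window=TimeWindow(window_size=timedelta(days=90)),
        ),
    ],
    online=True,
    offline=True,
    feature_start_time=datetime(2022, 5, 1),
    description="User transaction totals over a series of time windows, updated daily.",
)
def user_transaction_counts(transactions):
    # Mark every row with a constant 1 so the count aggregations can be applied.
    transactions["transaction"] = 1
    return transactions[["user_id", "transaction", "timestamp"]]
```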

See the API reference for the specific parameters available for each type of Feature View.
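To make the three `count` aggregations concrete, here is a plain-Python sketch (no Tecton involved) of the values they describe for a single user. It assumes each window covers the half-open interval `[as_of - window_size, as_of)` and that `as_of` snaps to a day boundary because of the one-day `aggregation_interval`. Tecton's exact window boundaries and output feature names may differ, so the names `count_1d`, `count_30d`, and `count_90d` are hypothetical.

```python
from datetime import datetime, timedelta

# Window sizes mirroring the three Aggregate definitions in the example.
WINDOWS = {
    "count_1d": timedelta(days=1),
    "count_30d": timedelta(days=30),
    "count_90d": timedelta(days=90),
}


def windowed_counts(events, user_id, as_of):
    """Count a user's transactions in each trailing window ending at `as_of`.

    Assumption: windows are half-open, [as_of - window_size, as_of).
    `events` is a list of (user_id, timestamp) tuples.
    """
    # With a one-day aggregation interval, values change only at day boundaries,
    # so truncate `as_of` to midnight.
    as_of = as_of.replace(hour=0, minute=0, second=0, microsecond=0)
    counts = {}
    for name, size in WINDOWS.items():
        start = as_of - size
        counts[name] = sum(
            1 for uid, ts in events if uid == user_id and start <= ts < as_of
        )
    return counts


events = [
    ("u1", datetime(2022, 6, 9, 15)),  # within the last day
    ("u1", datetime(2022, 5, 20)),     # 21 days earlier: 30d and 90d windows
    ("u1", datetime(2022, 4, 1)),      # 70 days earlier: 90d window only
    ("u2", datetime(2022, 6, 9, 12)),  # different user, ignored for u1
]
print(windowed_counts(events, "u1", datetime(2022, 6, 10, 8)))
# {'count_1d': 1, 'count_30d': 2, 'count_90d': 3}
```

Each window is computed independently from the same rows, which is why one transformation can feed several `Aggregate` definitions that differ only in `window_size`.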

Work with Feature Views

Working with Feature Views involves selecting appropriate transformation types and compute engines, configuring materialization settings, and integrating with Feature Services for serving.

Feature Naming Requirements

When defining features in Feature Views, feature names must follow specific naming constraints to ensure compatibility with Tecton's validation system.

Naming Rules:

- Feature names may contain only letters (a-z, A-Z) and digits (0-9)

- A single underscore (_) may be used as a separator

- No other special characters, spaces, or consecutive underscores are permitted

Examples of valid feature names:

- user_transaction_count

- amount_avg_7d

- score123

Examples of invalid feature names:

- user-transaction-count (hyphens are not allowed)

- amount__avg (consecutive underscores are not allowed)

- user transaction count (spaces are not allowed)

If you use invalid feature names, you will encounter validation errors similar to:

TectonValidationError: BatchFeatureView 'feature_view' failed validation: Invalid feature name override 'user-transaction-count' needs to contain only letters and numbers (optionally separated by a single underscore).
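To catch bad names before applying a repository, you could check them locally. The sketch below is a hypothetical helper, not Tecton's own validator: it encodes the rules listed above as a regular expression (letters and digits, optionally separated by single underscores). Under this reading, leading or trailing underscores are also rejected.

```python
import re

# Hypothetical pattern mirroring the naming rules: one or more alphanumeric runs
# joined by single underscores. Not Tecton's actual validation code.
FEATURE_NAME_PATTERN = re.compile(r"[A-Za-z0-9]+(?:_[A-Za-z0-9]+)*")


def is_valid_feature_name(name: str) -> bool:
    """Return True if the whole of `name` matches the naming rules."""
    return FEATURE_NAME_PATTERN.fullmatch(name) is not None


candidates = [
    "user_transaction_count",
    "amount_avg_7d",
    "score123",
    "user-transaction-count",
    "amount__avg",
    "user transaction count",
]
for candidate in candidates:
    print(f"{candidate!r}: {is_valid_feature_name(candidate)}")
```

Using `fullmatch` instead of `match` ensures that trailing characters such as `-count` cause a rejection rather than a partial match.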

Note that feature name length can affect how many features a Feature View can contain. Feature Views should contain fewer than approximately 500 features, though the actual limit may be lower depending on column name lengths and feature contents. For Feature Views with very large numbers of features, consider splitting them into multiple smaller Feature Views to prevent potential errors.
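One way to plan such a split is to group feature names greedily before writing the Feature Views. This is a hypothetical planning helper, not a Tecton API. The default of 500 comes from the approximate guidance above, and the optional combined-name-length budget is an assumed knob with no Tecton-documented value, included only because name length also affects the limit.

```python
from typing import List, Optional, Sequence

# Assumed default from the approximate guidance; the practical limit may be lower.
DEFAULT_MAX_FEATURES = 500


def split_features(
    names: Sequence[str],
    max_features: int = DEFAULT_MAX_FEATURES,
    max_total_name_chars: Optional[int] = None,
) -> List[List[str]]:
    """Greedily group feature names so each group can become its own Feature View.

    A new group starts when the current one already holds `max_features` names,
    or when adding the next name would push the group's combined name length past
    `max_total_name_chars` (a hypothetical budget; None disables this check).
    """
    if max_features < 1:
        raise ValueError("max_features must be at least 1")
    groups: List[List[str]] = []
    current: List[str] = []
    current_chars = 0
    for name in names:
        too_many = len(current) >= max_features
        too_long = (
            max_total_name_chars is not None
            and len(current) > 0
            and current_chars + len(name) > max_total_name_chars
        )
        if too_many or too_long:
            groups.append(current)
            current, current_chars = [], 0
        current.append(name)
        current_chars += len(name)
    if current:
        groups.append(current)
    return groups


names = [f"signal_{i}" for i in range(1200)]
print([len(group) for group in split_features(names)])  # [500, 500, 200]
```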

Feature View Compute Engines and Transformations

Every Feature View has a transformation type and an associated Compute Engine, both controlled by the Feature View's mode parameter.

Certain transformations are only compatible with specific Feature View types due to the nature of the compute engines they use. The table below shows which transformation types and associated compute engines (e.g. Rift or Spark) are supported for each Feature View.

| Feature View type | Rift | Spark |
|---|---|---|
| Batch Feature View | pandas, snowflake_sql, bigquery_sql, or python | spark_sql or pyspark |
| Stream Feature View | pandas or python | spark_sql or pyspark |
| Realtime Feature View | pandas or python | N/A |

When both Rift and Spark are enabled on the same cluster, Tecton selects the compute engine to use for specific Feature Views based on the Feature View's transformation type.
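Because each mode in the table belongs to exactly one engine, the selection can be read as a simple lookup. The sketch below is a hypothetical illustration that copies the table into a dictionary; it is not Tecton's internal selection logic, and the short view-type keys are assumptions.

```python
# Supported transformation modes per compute engine, copied from the table above.
SUPPORTED_MODES = {
    "batch": {
        "Rift": {"pandas", "snowflake_sql", "bigquery_sql", "python"},
        "Spark": {"spark_sql", "pyspark"},
    },
    "stream": {
        "Rift": {"pandas", "python"},
        "Spark": {"spark_sql", "pyspark"},
    },
    "realtime": {
        "Rift": {"pandas", "python"},
        "Spark": set(),  # N/A in the table
    },
}


def compute_engine_for(view_type: str, mode: str) -> str:
    """Return the engine whose supported modes include `mode` for this view type."""
    for engine, modes in SUPPORTED_MODES[view_type].items():
        if mode in modes:
            return engine
    raise ValueError(f"mode {mode!r} is not supported for {view_type} Feature Views")


print(compute_engine_for("batch", "snowflake_sql"))  # Rift
print(compute_engine_for("stream", "pyspark"))       # Spark
```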

When using Python mode, the transformation will take dictionaries as inputs and is expected to output a dictionary.

When using Pandas mode, the transformation will take Pandas DataFrames as inputs and is expected to output a Pandas DataFrame.
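As an illustration of the Python-mode contract, the function below takes dictionaries and returns a dictionary. It is a hypothetical sketch: the Tecton decorator is omitted so it runs on its own, and the function name, input fields (`amount`, `amount_avg_30d`), and output key are assumptions.

```python
def transaction_amount_vs_average(transaction_request: dict, user_stats: dict) -> dict:
    """Python-mode style transformation: dict inputs in, one dict out.

    Hypothetical inputs: `transaction_request` carries the request's `amount`,
    and `user_stats` carries a precomputed `amount_avg_30d`.
    """
    avg = user_stats.get("amount_avg_30d") or 0.0
    # Avoid dividing by zero when no average is available.
    ratio = transaction_request["amount"] / avg if avg else None
    return {"amount_to_avg_ratio": ratio}


print(transaction_amount_vs_average({"amount": 150.0}, {"amount_avg_30d": 50.0}))
# {'amount_to_avg_ratio': 3.0}
```

In Pandas mode, the same logic would instead receive DataFrames and operate on whole columns at once, for example `df["amount"] / df["amount_avg_30d"]`.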

Python transformations are faster in online environments because they avoid the overhead of constructing a Pandas DataFrame. However, they are slower in offline environments when executed across many rows because they cannot take advantage of vectorized operations. The opposite is true of Pandas transformations.

As a result, for any online application we generally recommend using Pandas for Batch and Stream Feature Views and Python for Realtime Feature Views, because Realtime Feature Views directly affect online serving latencies.

If your features are used strictly in a batch prediction use case, Tecton recommends using Pandas transformations everywhere.
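The guidance above reduces to a small decision rule. The function below is a hypothetical summary of these two paragraphs for Rift modes only. It is not a Tecton API, and the short view-type keys are assumptions.

```python
def recommended_mode(view_type: str, serves_online: bool) -> str:
    """Return the transformation mode suggested by the guidance above.

    view_type: "batch", "stream", or "realtime".
    serves_online: whether the features feed an online application.
    """
    if view_type not in {"batch", "stream", "realtime"}:
        raise ValueError(f"unknown Feature View type: {view_type!r}")
    if not serves_online:
        # Strictly batch prediction: vectorized Pandas everywhere.
        return "pandas"
    # Realtime Feature Views sit on the request path, so skip DataFrame overhead there.
    return "python" if view_type == "realtime" else "pandas"


for view_type in ("batch", "stream", "realtime"):
    print(view_type, recommended_mode(view_type, serves_online=True))
```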

Registering a Feature View

Feature Views are registered by adding a decorator (e.g. @batch_feature_view) to a Python function. The decorator supports several parameters to configure the Feature View.

The default name of the Feature View registered with Tecton will be the name of the function. If needed, the name can be explicitly set using the name decorator parameter.
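The naming behavior follows a common Python pattern: a decorator factory that falls back to the decorated function's `__name__`. The toy registry below is hypothetical and only illustrates that pattern. It is not how Tecton registers objects (Tecton's decorators return Feature View objects rather than the original function).

```python
# Hypothetical, simplified registry used only to illustrate default naming.
REGISTRY = {}


def toy_feature_view(name=None, **config):
    """Register the decorated function under `name`, defaulting to its __name__."""
    def decorator(func):
        registered_name = name or func.__name__
        REGISTRY[registered_name] = {"function": func, "config": config}
        return func
    return decorator


@toy_feature_view(mode="pandas")
def user_transaction_counts(transactions):
    return transactions


@toy_feature_view(name="user_txn_counts_v2", mode="pandas")
def user_transaction_counts_v2(transactions):
    return transactions


print(sorted(REGISTRY))  # ['user_transaction_counts', 'user_txn_counts_v2']
```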

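The function's inputs are retrieved from the specified sources, in the order the sources are listed: the first parameter receives data from the first source, the second from the second, and so on. Tecton uses the function's pipeline definition to construct, register, and execute the specified graph of transformations.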
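The positional binding between sources and function parameters can be shown with a toy runner. Everything here is hypothetical: `run_pipeline`, `add_user_country`, the `users_batch` source, and the rows are illustrative stand-ins, not Tecton internals.

```python
from typing import Callable, Dict, List, Sequence


def run_pipeline(func: Callable, sources: Sequence[str], fetched: Dict[str, list]):
    """Call `func` with one positional argument per source, in the listed order."""
    inputs = [fetched[source] for source in sources]
    return func(*inputs)


def add_user_country(transactions: List[dict], users: List[dict]) -> List[dict]:
    # The first parameter binds to the first source and the second to the second.
    country_by_user = {u["user_id"]: u["country"] for u in users}
    return [{**t, "country": country_by_user.get(t["user_id"])} for t in transactions]


# Hypothetical rows standing in for data already read from each source.
fetched = {
    "transactions_batch": [{"user_id": "u1", "amount": 20}],
    "users_batch": [{"user_id": "u1", "country": "US"}],
}
print(run_pipeline(add_user_country, ["transactions_batch", "users_batch"], fetched))
# [{'user_id': 'u1', 'amount': 20, 'country': 'US'}]
```

Swapping the order of `sources` would bind the user rows to `transactions`, which is why the parameter order must match the source order.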

Defining the Feature View Function

A Feature View defines one transformation function that is executed when the Feature View runs. It runs against data retrieved from external data sources. For details, see the Transformations section.

Interacting with Feature Views

The Tecton SDK provides a set of methods that allow you to interactively test features or generate training data in any Python environment, such as a Python notebook. See Reading Feature Data for details.

What's Next

Begin creating Feature Views:

- Batch Feature Views

- Stream Feature Views

- Realtime Feature Views

Create a Feature Service to serve the features defined in your Feature Views.